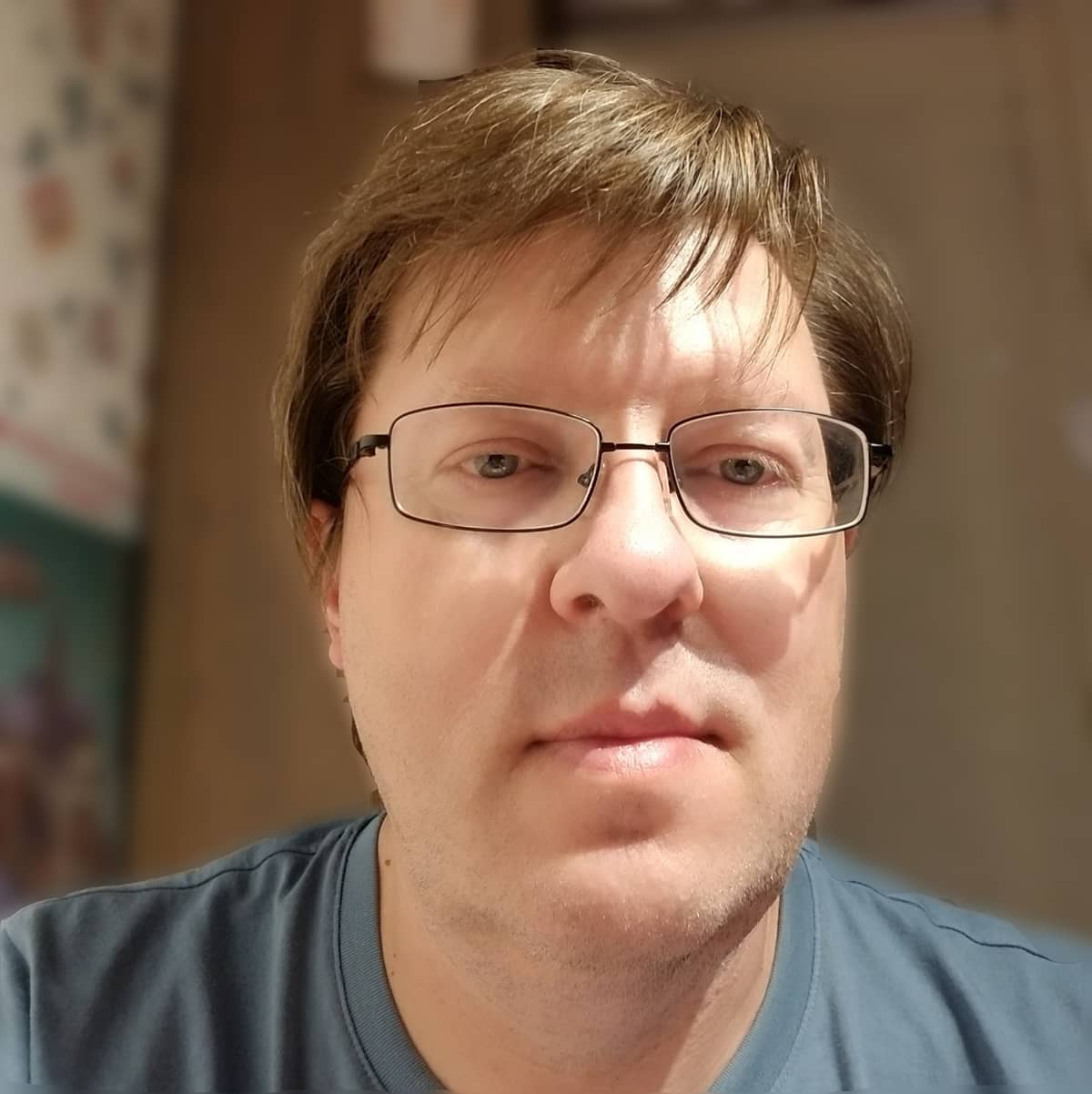

Author Spotlight

Dmitry Zinoviev

@aqsaqal

Today we’re putting our spotlight on Dmitry Zinoviev, author of *Data Science Essentials in Python*, *Pythonic Programming*, *Complex Network Analysis in Python*, and *Resourceful Code Reuse*. Pragmatic sat down and talked with Professor Zinoviev about everything Python.

This is also an AMA. Everyone commenting or asking a question will automatically be entered into our draw to win one of his books!

Without further ado…