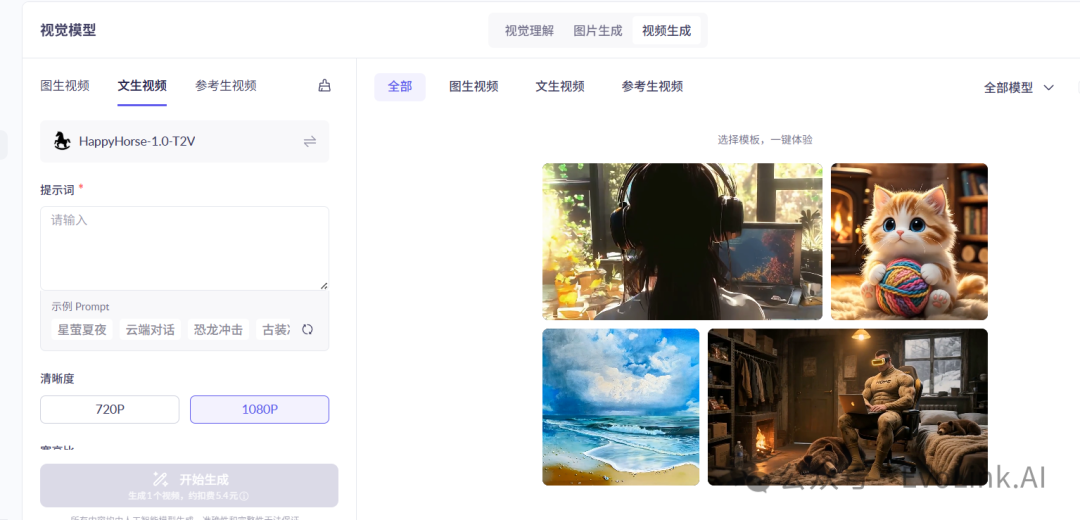

Alibaba just opened public API access for HappyHorse 1.0, the model currently ranked #1 on Video Arena’s blind tests.

What caught my attention isn’t only the ranking. It’s the shape of the API:

- text-to-video
- image-to-video
- reference-to-video with up to 9 refs
- natural-language video editing

And the pricing is simple enough to reason about: 0.9 RMB/sec at 720P, 1.6 RMB/sec at 1080P.
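
To make that concrete, here is a quick back-of-envelope estimator using the two per-second rates above. It is only a sketch: I'm assuming billing is linear in output duration with no minimum charge or rounding, which I have not verified, and `estimate_cost_rmb` is my own helper name, not anything from the API.

```python
from decimal import Decimal

# Per-second rates quoted in the post (RMB per second of video).
RATES_RMB_PER_SEC = {
    "720P": Decimal("0.9"),
    "1080P": Decimal("1.6"),
}


def estimate_cost_rmb(seconds: int, resolution: str, clips: int = 1) -> Decimal:
    """Estimate the cost in RMB of `clips` videos of `seconds` each.

    Assumption: cost = rate * seconds * clips, with no minimums or rounding.
    """
    if seconds <= 0 or clips <= 0:
        raise ValueError("seconds and clips must be positive")
    try:
        rate = RATES_RMB_PER_SEC[resolution]
    except KeyError:
        raise ValueError(f"unknown resolution: {resolution!r}") from None
    return rate * seconds * clips


if __name__ == "__main__":
    print(estimate_cost_rmb(10, "720P"))         # one 10-second 720P clip
    print(estimate_cost_rmb(10, "1080P", 100))   # a batch of 100 such clips at 1080P
```

Under those assumptions a 10-second clip comes to 9 RMB at 720P and 16 RMB at 1080P, so a batch of 100 clips at 1080P would be 1,600 RMB.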

What I’m wondering is this:

Does controllability matter more than raw quality now?

The launch examples suggest HappyHorse is very good at following camera language and multi-shot structure. That feels more important for real products than one-off impressive outputs.

For example:

- “Shot 1 / Shot 2 / Shot 3” prompt structure
- explicit camera motion
- style-first prompts for anime or cinematic looks
- image-to-video workflows that preserve subject identity better than pure t2v

That sounds like a model designed for pipelines, not just experimentation.
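
In a pipeline, that kind of prompt would probably be assembled from structured data rather than typed by hand. A minimal sketch of what I mean is below. The exact layout (a `Style:` line followed by `Shot N:` lines with a `Camera:` suffix) is my own guess at a reasonable format, not a documented HappyHorse convention, and `Shot` and `build_multishot_prompt` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class Shot:
    description: str  # what happens in the shot
    camera: str       # explicit camera motion, e.g. "slow push in"


def build_multishot_prompt(style: str, shots: list[Shot]) -> str:
    """Assemble a style-first, shot-numbered prompt string.

    Assumed layout: one "Style:" line, then one "Shot N:" line per shot.
    """
    if not shots:
        raise ValueError("at least one shot is required")
    lines = [f"Style: {style}"]
    for i, shot in enumerate(shots, start=1):
        lines.append(f"Shot {i}: {shot.description} Camera: {shot.camera}.")
    return "\n".join(lines)


if __name__ == "__main__":
    prompt = build_multishot_prompt(
        "cinematic, shallow depth of field",
        [
            Shot("A rider crosses a ridge at dawn.", "wide aerial tracking"),
            Shot("Close-up of the horse's eye.", "slow push in"),
            Shot("The rider dismounts by a river.", "static low angle"),
        ],
    )
    print(prompt)
```

Keeping shots as data means the same storyboard can be re-rendered with a different style line, or with one shot swapped out, without rewriting the whole prompt.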

I’m also curious whether teams here would prefer:

- one strong general video endpoint, or
- separate endpoints like HappyHorse has for t2v / i2v / r2v / edit

My current take: splitting the API by workflow is the right call. It reduces ambiguity and makes production integration easier.
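
Here is roughly why I think that, sketched as client-side types. Everything in it is hypothetical: the class names, field names, and route strings are mine and do not come from the HappyHorse API docs. The only detail taken from the launch is the limit of 9 references.

```python
from dataclasses import dataclass, field

MAX_REFERENCES = 9  # "up to 9 refs" per the launch description


@dataclass
class TextToVideo:
    prompt: str


@dataclass
class ImageToVideo:
    prompt: str
    image_path: str


@dataclass
class ReferenceToVideo:
    prompt: str
    reference_paths: list[str] = field(default_factory=list)

    def __post_init__(self) -> None:
        # Validation lives with the workflow it belongs to.
        n = len(self.reference_paths)
        if not 1 <= n <= MAX_REFERENCES:
            raise ValueError(f"expected 1..{MAX_REFERENCES} references, got {n}")


@dataclass
class VideoEdit:
    instruction: str
    video_path: str


# Hypothetical route names, one per workflow.
ROUTES = {
    TextToVideo: "t2v",
    ImageToVideo: "i2v",
    ReferenceToVideo: "r2v",
    VideoEdit: "edit",
}


def route_for(request) -> str:
    """Pick the route from the request type alone, with no field sniffing."""
    return ROUTES[type(request)]
```

With a single general endpoint, the server has to infer the workflow from which optional fields happen to be set. With one request type per workflow, each call has exactly the required fields and an invalid combination fails before anything is sent.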