Here we go again... GitHub made Copilot free (with limitations). I have tried it and I was a bit surprised, but only a bit … Please keep in mind that Copilot does not officially support Elixir.

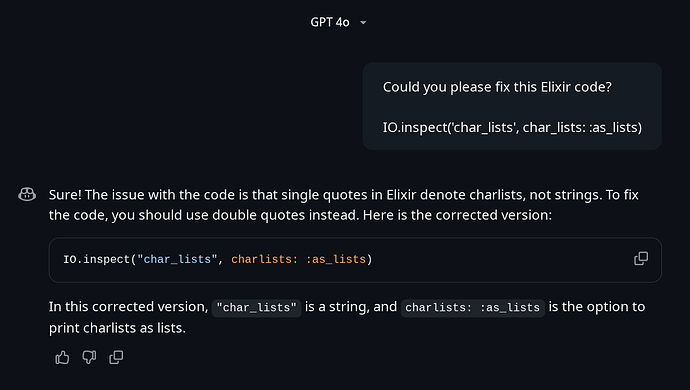

GPT 4o

Let’s keep this quick so I don’t repeat myself too much once again …

- Why do chatbots always try to convince me that I want something other than what I asked for? I don’t want a `String`!

- The chatbot changed the option key (correctly) and the value (valid, but wrong for this case), and didn’t mention either change.

- Once again ChatGPT tries to convince me that I want to print the data as a list … even though it also changed a `charlist` to a `String`!

- Nothing in the last paragraph is false, but it answers a different question. I didn’t ask to change the type, but to fix the code. Is GPT trained on commits with only `fix` as a message?

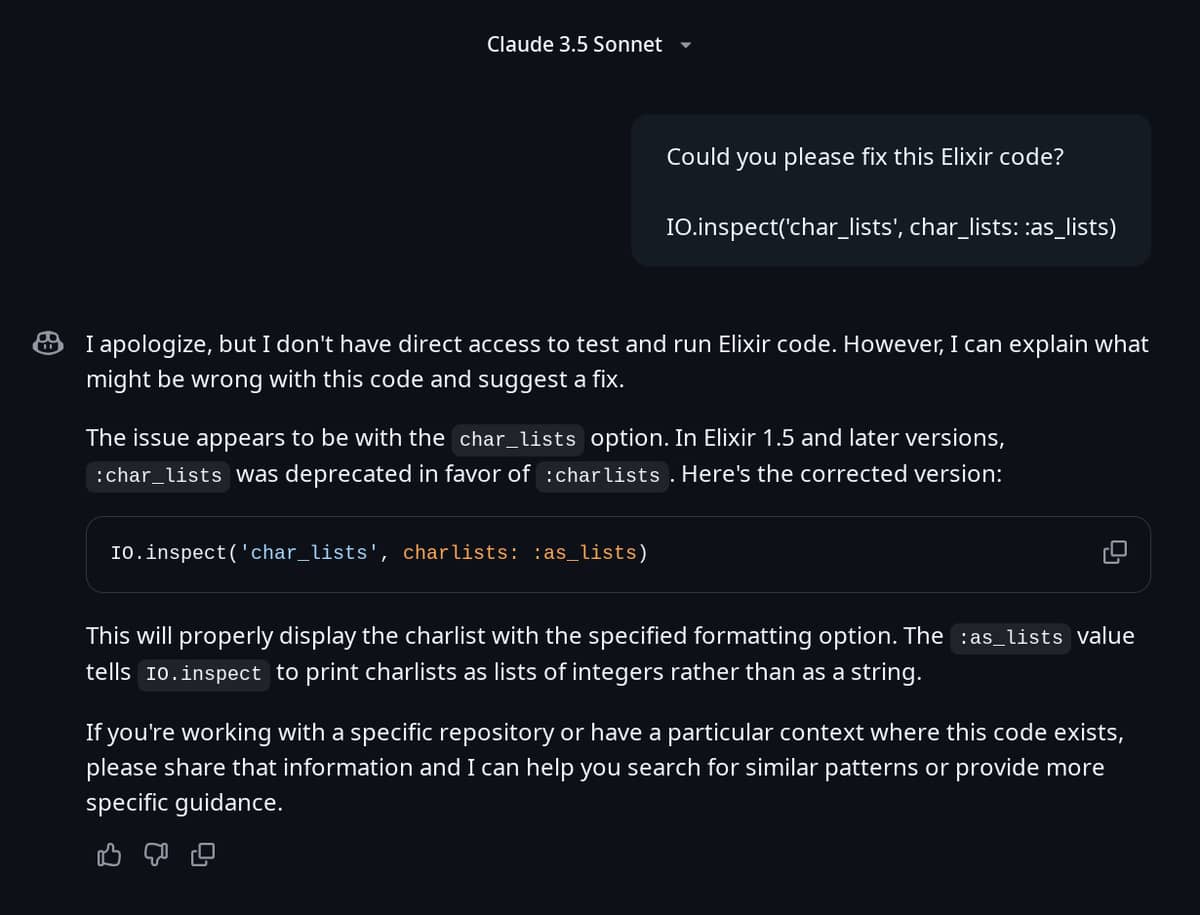

Claude 3.5 Sonnet

First of all, I respect what the chatbot wrote in the first paragraph. To be perfect, it should also include a note like:

> Please note that Elixir is not an officially supported language in Copilot, see the link below for more information:
>
> GitHub language support - GitHub Docs

The second paragraph is amazing! This is the first chatbot to mention the deprecation, and it even told me which Elixir version deprecated the old naming.

It’s still far from perfect, but it’s on the right track. A better answer would include:

- A mention that the naming change has been recommended since Elixir version `1.3.0`, because from that version on the documentation at hexdocs uses the new naming.

- Links to the changelog, discussion, issue, PR, and maybe even a direct link to the commit (the commit page also includes some helpful information, so in some cases it’s worth mentioning too).

The changed code, however, lowers the quality of the answer.

- It does not suggest the naming change `'char_lists'` → `'charlists'`

- It does not suggest the `c` sigil: `'char_lists'` → `~c"char_lists"`

- The option key is changed to the proper one, as described. However, once again the value is valid, but not the right one for the value in question. It had already found a deprecation, so it could have just followed that lead!
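To make the three points above concrete, here is a minimal sketch of the fix I would have expected. The original snippet is only visible in the screenshot, so this is a hypothetical reconstruction: I am assuming the question used the old `char_lists: :as_char_lists` inspect option and that the chatbots answered with `charlists: :as_lists`.

```elixir
# Hypothetical reconstruction; the variable names are mine, not from the screenshot.
# The c sigil replaces the single-quoted literal, and the content uses the new naming.
value = ~c"charlists"

# Assumed deprecated spelling, kept as a comment so this script emits no warning:
#   inspect(value, char_lists: :as_char_lists)

# Expected fix: new option key AND the matching new value, so the intent
# (print the data as a charlist) stays the same.
as_charlist = inspect(value, charlists: :as_charlists)

# What the chatbots presumably suggested: a valid value, but it changes the
# behaviour and prints the data as a list of integers.
as_list = inspect(value, charlists: :as_lists)

IO.puts(as_charlist)
IO.puts(as_list)
```

The exact text printed for `as_charlist` depends on the Elixir version (newer releases print the `~c` sigil form, older ones the single-quoted form), while `as_list` is always the list of code points.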

As with GPT 4o, the next paragraph is almost correct but answers the wrong question. We can still see that the chatbot has trouble telling a charlist from a string.
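For anyone who is not sure about the difference either, a short sketch (the variable names are just for illustration): a charlist is a list of code points, while a string is a UTF-8 encoded binary, so they are different types even when they look alike.

```elixir
charlist = ~c"abc"  # a list of integer code points
string = "abc"      # a UTF-8 encoded binary

# Different types, checked with different guards.
charlist_is_list = is_list(charlist)
string_is_binary = is_binary(string)

# A charlist is literally a list of integers.
same_as_integers = charlist == [97, 98, 99]

# They never compare equal without an explicit conversion.
equal_without_conversion = charlist == string

# Converting is explicit, in both directions.
converted_to_string = List.to_string(charlist)
converted_to_charlist = String.to_charlist(string)

IO.inspect({charlist_is_list, string_is_binary, same_as_integers, equal_without_conversion})
IO.inspect({converted_to_string == string, converted_to_charlist == charlist})
```

So changing a charlist to a string is a type change, not a fix for a deprecated option name.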

I would not have said a word about the last paragraph if the chatbot had not stopped halfway. Many of the additions mentioned above are “nice to have” and matter less than the correct answer. Since it had already noticed the deprecation, it should have given a proper answer without needing any more context.

Summary

Claude 3.5 Sonnet in GitHub Copilot is the first chatbot that I can recommend to Elixir developers, but only to seniors who understand the problems with so-called "AI"s. I hope that in the next version its developers will be able to make the chatbot stick to one idea without telling users that they want something else. Since it even found the version, I also hope it will become much more descriptive and provide links for learning purposes.

I guess that until Elixir is officially supported we will still need to be extremely careful with chatbot responses. However, it’s not really as bad as it looks. It shows that this is not an AI that suddenly “woke up”, but just another algorithm. I can’t blame any developer working on any chatbot for such mistakes, simply because LLMs alone were never intended for that purpose, and if they did not change the narrative then I would not see any problem with the LLM part.

They are already doing well. We should also keep in mind that the more lines of code there are, the more bugs are likely to be introduced. I would say that changing the direction of a response (from the deprecation to changing the code logic) may be considered a bug, but I also understand that LLMs have trouble figuring out the context.